ebpmf.alpha_v0.3.9_summary

zihao12

2020-05-19

Last updated: 2020-05-19

Checks: 7 0

Knit directory: ebpmf_data_analysis/

This reproducible R Markdown analysis was created with workflowr (version 1.6.2). The Checks tab describes the reproducibility checks that were applied when the results were created. The Past versions tab lists the development history.

Great! Since the R Markdown file has been committed to the Git repository, you know the exact version of the code that produced these results.

Great job! The global environment was empty. Objects defined in the global environment can affect the analysis in your R Markdown file in unknown ways. For reproduciblity it’s best to always run the code in an empty environment.

The command set.seed(20200511) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.

Great job! Recording the operating system, R version, and package versions is critical for reproducibility.

Nice! There were no cached chunks for this analysis, so you can be confident that you successfully produced the results during this run.

Great job! Using relative paths to the files within your workflowr project makes it easier to run your code on other machines.

Great! You are using Git for version control. Tracking code development and connecting the code version to the results is critical for reproducibility.

The results in this page were generated with repository version dc51799. See the Past versions tab to see a history of the changes made to the R Markdown and HTML files.

Note that you need to be careful to ensure that all relevant files for the analysis have been committed to Git prior to generating the results (you can use wflow_publish or wflow_git_commit). workflowr only checks the R Markdown file, but you know if there are other scripts or data files that it depends on. Below is the status of the Git repository when the results were generated:

Ignored files:

Ignored: .Rhistory

Ignored: .Rproj.user/

Ignored: analysis/ebpmf_bg_tutorial_cache/

Untracked files:

Untracked: analysis/compare_LF.R

Untracked: analysis/plot_topic_words.R

Note that any generated files, e.g. HTML, png, CSS, etc., are not included in this status report because it is ok for generated content to have uncommitted changes.

These are the previous versions of the repository in which changes were made to the R Markdown (analysis/ebpmf.alpha_v0.3.9_summary.Rmd) and HTML (docs/ebpmf.alpha_v0.3.9_summary.html) files. If you’ve configured a remote Git repository (see ?wflow_git_remote), click on the hyperlinks in the table below to view the files as they were in that past version.

| File | Version | Author | Date | Message |

|---|---|---|---|---|

| Rmd | dc51799 | zihao12 | 2020-05-19 | add todo to v0.3.9 summary |

| html | 47d0686 | zihao12 | 2020-05-19 | Build site. |

| Rmd | c54e018 | zihao12 | 2020-05-19 | v0.3.9 summary |

| html | 8d1d007 | zihao12 | 2020-05-19 | Build site. |

| Rmd | e53c1c4 | zihao12 | 2020-05-19 | v0.3.9 summary |

Model and implementation details:

Individual data analysis results:

some observations

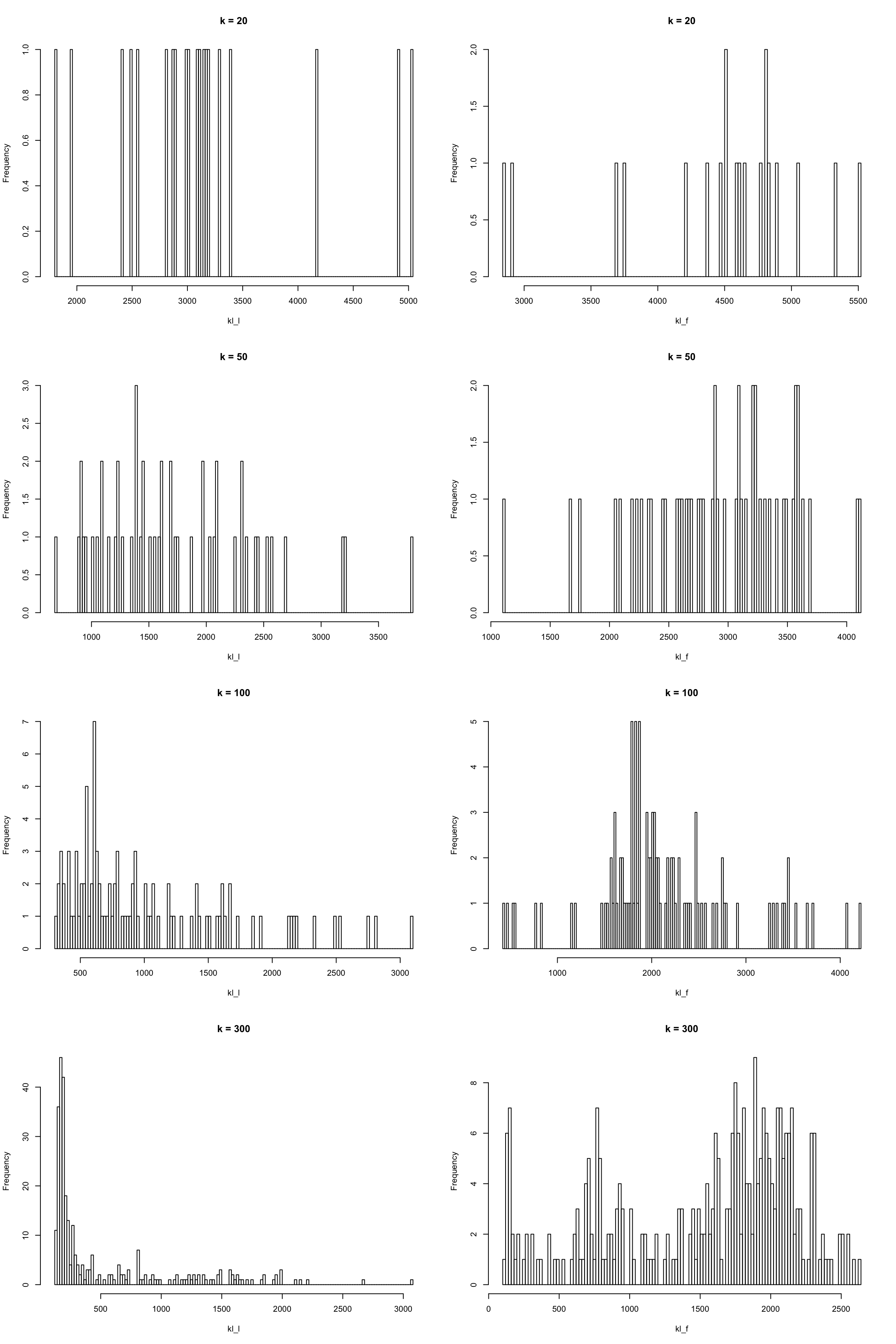

- \(g_L\) ’s components have large \(\phi_l\) (\(100\)), \(g_F\) has components with both large (\(100\)) and small (\(0.01\)) \(\phi_l\).

bgmodel can identify rare but important words for a topic. (although they are not very easy to understand sometimes… may need better dataset?)

- The fitted background is quite different from the rank-1 fit, and the row-wise median from PMF fit.

More comparisons across different \(K\)

Note that

\[ELBO = E_q(\text{log-lik}) - KL(q || g)\].

K ELBO KL E_ll ll_pmf

1 20 -1700057 151380.3 -1548677 -1504099

2 50 -1688549 232036.2 -1456512 -1379746

3 100 -1702420 313105.3 -1389315 -1264891

4 300 -1847818 608624.9 -1239193 -1038952For a large \(K\), we should expect optimal soultion to have ELBO no less than small \(K\): we can just make \(q, g\) to be point-mass at 0 for extra topics to ensure equal ELBO. But how can we achieve them? If we can do that, the algorithm can choose automatically \(K\).

(say \(K = 50\) is optimal, and we use \(K = 60\). we might initialize the last 20 to be point mass at 0. but the bg structure make it hard…)

TODO:

As suggested by Matthew:

add \(w_k\) to the model

\[\begin{align} & X_{ij} \sim Pois(l_{i0} f_{j0} \sum_k w_k l_{ik} f_{jk})\\ & l_{ik} \sim g_{L, k}(.), f_{jk} \sim g_{F, k}(.) \\ & \sum_{i} l_{i0} = \sum_j f_{j0} = 1 \end{align}\]where \(g\) are mixtures of gamma, with mean 1 and different variances.

We use \(w_k\) to account for the inbalance of topics.

think about rank-1 update and automatically choose \(K\)

Analogous to the greedy algorithm (rank-1 update) in flashr, we want to do similar things in our model.

how to pick key words for each topic

We already see that we can learn some rare and important key words from \(\bar{f}_{jk}\) that we can’t from \(f_{j0}\bar{f}_{jk}\). Peter has some ways to find ways to find key words so it wold be great to apply them here.

find a better dataset

Some issue with the kos dataset: some words are not truncated properly (particularly names); does not include common and non-informative words like “a”, “the” (which would allow us to compare with common but informative words)

overfitting?

IN \(g_F\), most topics have around \(80\) percent of weight on large \(\phi_l\), which shrinks the enrichment of most words to almost 0. For a very small number in \(f_{j0}\bar{f}_{jk}\), which is more suitable: a small background or very little enrichment?

R version 3.5.1 (2018-07-02)

Platform: x86_64-apple-darwin15.6.0 (64-bit)

Running under: macOS 10.14

Matrix products: default

BLAS: /Library/Frameworks/R.framework/Versions/3.5/Resources/lib/libRblas.0.dylib

LAPACK: /Library/Frameworks/R.framework/Versions/3.5/Resources/lib/libRlapack.dylib

locale:

[1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

attached base packages:

[1] stats graphics grDevices utils datasets methods base

loaded via a namespace (and not attached):

[1] workflowr_1.6.2 Rcpp_1.0.2 rprojroot_1.3-2 digest_0.6.22

[5] later_0.8.0 R6_2.4.0 backports_1.1.5 git2r_0.26.1

[9] magrittr_1.5 evaluate_0.14 stringi_1.4.3 fs_1.3.1

[13] promises_1.0.1 whisker_0.3-2 rmarkdown_2.1 tools_3.5.1

[17] stringr_1.4.0 glue_1.3.1 httpuv_1.5.1 xfun_0.8

[21] yaml_2.2.0 compiler_3.5.1 htmltools_0.3.6 knitr_1.28